With private company defaults climbing past 9.2%, the highest level in years, venture capital firm Lux Capital recently urged AI companies to get their compute capacity agreements in writing. In a turbulent financial climate that is rippling through the AI supply chain, Lux warned, verbal agreements and handshake deals may prove unreliable.

An alternative is gaining traction, though: skipping external compute infrastructure altogether. Small AI models that run directly on user devices, with no need for data centers, cloud providers, or other third parties, have become capable enough to be a viable option. One company stepping into this space with growing momentum is the Spanish startup Multiverse Computing.

Multiverse has stayed relatively under the radar compared with industry heavyweights, but it is starting to stand out as demand for efficient AI grows. The company specializes in compressing models from leading developers such as OpenAI, Meta, DeepSeek, and Mistral AI.

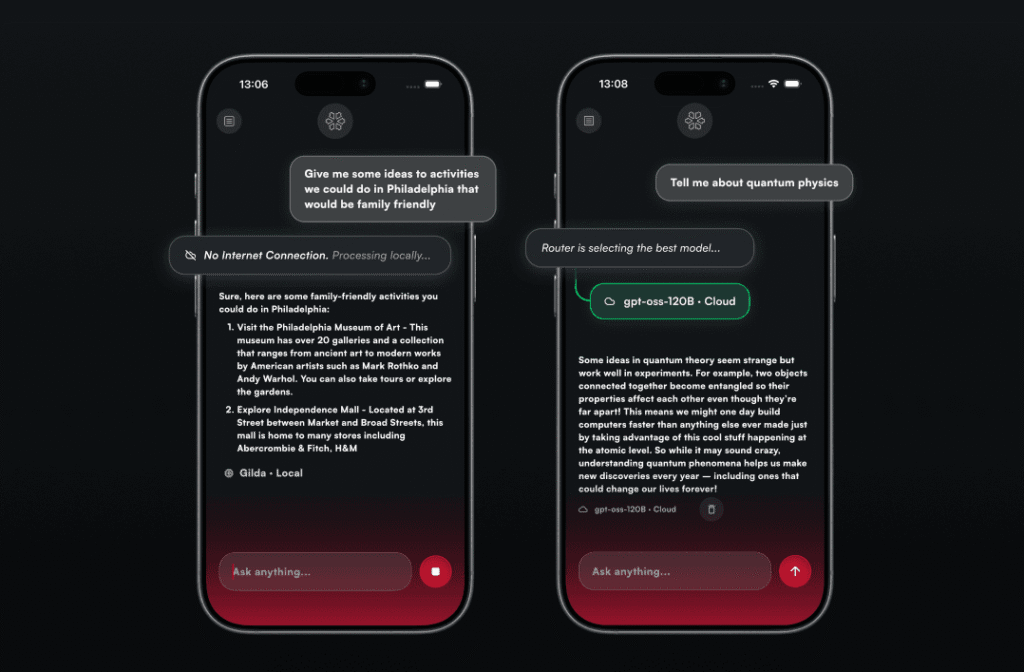

To showcase its technology, Multiverse has launched both an app and an API portal, giving developers and users direct access to its compressed models. The centerpiece is the CompactifAI app, a chat-based assistant similar to ChatGPT or Mistral’s Le Chat, where users can ask questions and get answers in real time.

What sets CompactifAI apart is Gilda, a very small model that can run locally and offline on a user’s device; Multiverse cites Gilda’s size and efficiency as key differentiators. For end users, this means “AI on the edge”: their data stays private and never leaves the device, and no internet connection is needed. There is an important caveat, however: the app requires a device with enough RAM and storage. On older smartphones, such as legacy iPhone models, those limits can force a fallback to cloud processing through Multiverse’s API.

This fallback is handled by an automatic routing system called Ash Nazg, a cheeky nod to the inscription on the One Ring in Tolkien’s *The Lord of the Rings*. But whenever cloud processing kicks in, it undercuts the privacy-first appeal that is one of the app’s most compelling features. Tradeoffs like these currently limit CompactifAI’s potential for mass consumer adoption: according to Sensor Tower data, the app had fewer than 5,000 downloads in the past month.

Mass adoption may never have been Multiverse’s main goal, though. The company is targeting business customers, and its newly launched self-service API portal lets developers and enterprises integrate its compressed models directly into their own applications without going through third-party platforms such as AWS Marketplace. By prioritizing simplicity and accessibility for businesses, Multiverse is trying to carve out a niche in a fast-moving AI landscape.
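The local-versus-cloud tradeoff described above can be illustrated with a simple capability check. Multiverse has not published how Ash Nazg makes its decisions, so the `Route` and `DeviceProfile` types, the `choose_route` function, and the RAM and storage thresholds below are all hypothetical, chosen only to show the general idea of preferring on-device inference and falling back to the cloud.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    LOCAL = "local"  # run the compact model on the device, offline
    CLOUD = "cloud"  # send the query to a hosted model via an API


@dataclass
class DeviceProfile:
    free_ram_gb: float      # memory currently available to the app
    free_storage_gb: float  # space available for downloading model weights
    model_installed: bool   # whether the local model is already on the device
    online: bool            # whether a network connection is available


# Hypothetical requirements for a small on-device model (not Multiverse's figures).
MIN_RAM_GB = 4.0
MIN_STORAGE_GB = 2.0


def choose_route(device: DeviceProfile) -> Route:
    """Prefer on-device inference; use the cloud only as a fallback."""
    has_resources = device.free_ram_gb >= MIN_RAM_GB and (
        device.model_installed or device.free_storage_gb >= MIN_STORAGE_GB
    )
    if has_resources:
        return Route.LOCAL  # the query never leaves the device
    if device.online:
        return Route.CLOUD  # privacy tradeoff: the query is sent off-device
    raise RuntimeError("device cannot run the model locally and is offline")


# Example: an older phone with little free memory falls back to the cloud.
old_phone = DeviceProfile(free_ram_gb=2.0, free_storage_gb=1.0,
                          model_installed=False, online=True)
print(choose_route(old_phone))  # Route.CLOUD
```

Checking local capability first mirrors the app’s privacy-first framing: the cloud path is taken only when the device falls short, which is exactly where the privacy tradeoff appears.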

The CompactifAI API portal gives developers direct access to the compressed models, with the transparency and control needed to deploy them in production, according to CEO Enrique Lizaso. The portal also includes real-time usage monitoring. Its launch comes as a growing number of enterprises explore alternatives to large language models (LLMs).

Key motivators include the ability to deploy models on edge devices and lower compute costs. Small models are also far less limited in capability than they once were. Earlier this week, Mistral released the latest addition to its small-model lineup, Mistral Small 4, which is designed for general conversation, coding, reasoning, and agentic tasks.

The company also unveiled Forge, a system that lets enterprises build custom models, particularly smaller ones, and choose the trade-offs that best suit their use cases. Multiverse’s own recent releases further illustrate how the performance gap between small models and LLMs is narrowing.

For example, its new compressed model, HyperNova 60B 2602, is built from gpt-oss-120b, an open-weight model released by OpenAI. Multiverse says HyperNova responds faster and at lower cost than its predecessor, a critical advantage for agentic coding workflows, in which AI autonomously handles complex, multi-step programming tasks.

Adapting models for mobile devices without sacrificing their usefulness, however, remains a significant hurdle. Apple Intelligence tackles this challenge by pairing an on-device model with cloud-based processing. Similarly, Multiverse’s CompactifAI app lets users route queries through the gpt-oss-120b API. But its main goal is to showcase the benefits of local models like Gilda and its successors, which go beyond cost savings. For professionals in sectors that demand high levels of privacy and reliability, being able to run models locally, without depending on a cloud connection, is invaluable.

Beyond these technical advantages, small local models open up new business opportunities, such as embedding AI in drones, satellites, and other devices that operate where connectivity is limited. Multiverse already counts more than 100 clients worldwide, including the Bank of Canada, Bosch, and Iberdrola, and appears well positioned to grow. After raising a $215 million Series B round last year, the company is reportedly preparing to raise another €500 million at a valuation of more than €1.5 billion.